Why cancer screening has never been shown to “save lives”—and what we can do about it

BMJ 2016; 352 doi: https://doi.org/10.1136/bmj.h6080 (Published 06 January 2016) Cite this as: BMJ 2016;352:h6080

Including all mortality

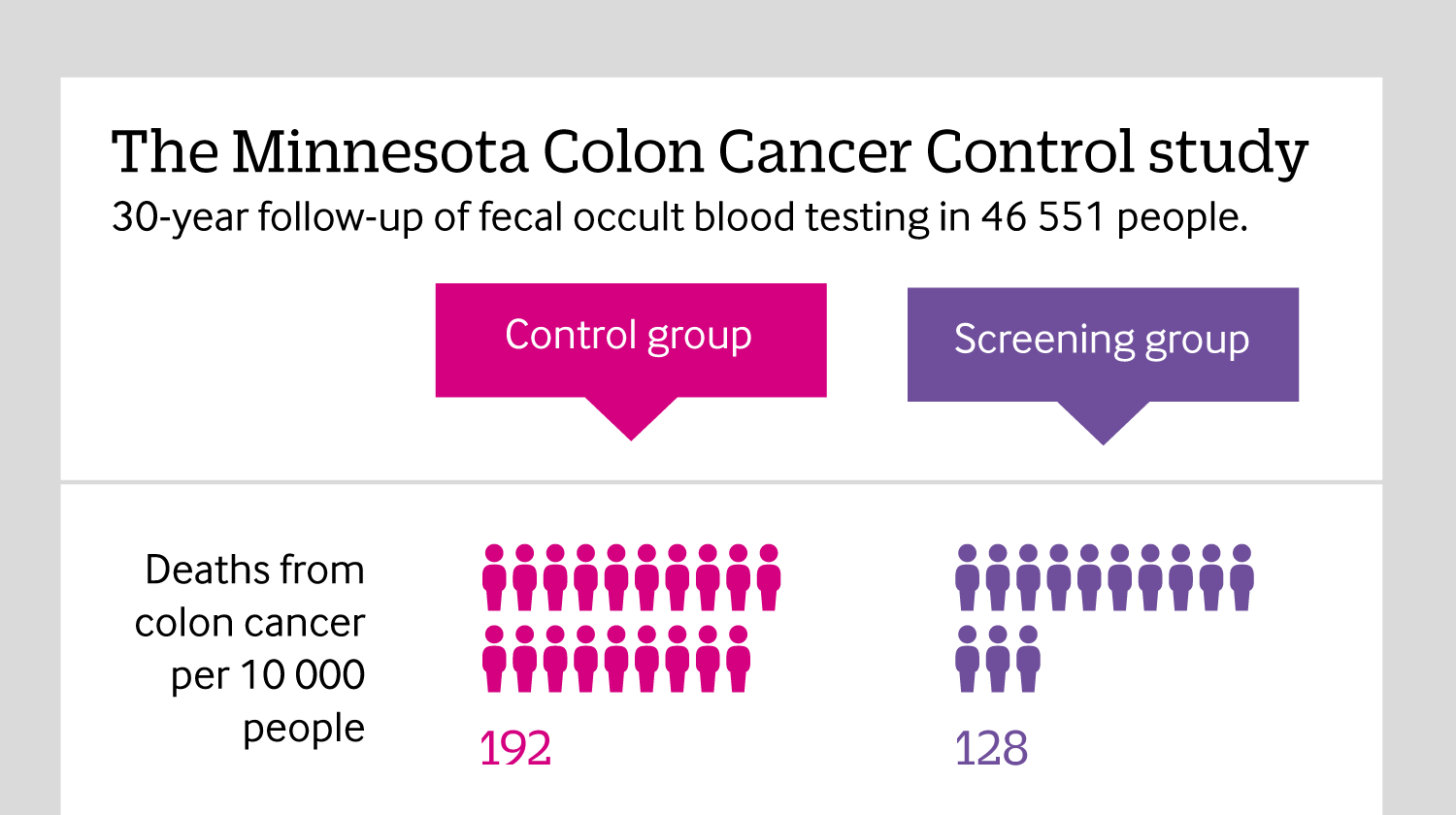

Infographic: why reporting all causes of mortality in cancer screening trials is so important.

All rapid responses

Rapid responses are electronic comments to the editor. They enable our users to debate issues raised in articles published on bmj.com. A rapid response is first posted online. A proportion of responses will, after editing, be published online and in the print journal as letters, which are indexed in PubMed. Rapid responses are not indexed in PubMed and they are not journal articles. The BMJ reserves the right to remove responses which are being wilfully misrepresented as published articles or when it is brought to our attention that a response spreads misinformation.


Mr. Kivela notes that in our description of the Danish Lung Cancer Screening Trial we used the phrase "trend toward significance." His point about that phrase is well taken and he is correct.

We mentioned this trial to show that when an appropriate comparator (i.e. no intervention) was used, a randomized trial of CT screening for lung cancer did not show a benefit. The absolute rate of death was greater in the screened group, but the difference was not statistically significant.

I thank Mr. Kivela for making this point. At the same time, and I think Mr. Kivela will agree, this does not alter the message of our analysis that urges doctors to be frank with healthy people about cancer screening: that no test has been shown to improve overall mortality, and as such people should not be told that screening has been proven to "save lives."

Competing interests: No competing interests

All the colleagues mentioned above rushed to praise cytology-based cervical screening and arbitrarily excluded it from the embarrassing conclusions of this analysis, implying that the Pap test has demonstrated cardinal importance in detecting early precancerous lesions and minimal cervical cancers.

This is not the case, however, in light of recent evidence on its sensitivity and specificity compared with VIA (visual inspection with acetic acid).

This two-minute technique, performed during a routine clinical examination, is very cheap, does not require an attached cytology laboratory, relies on naked-eye or simple magnifying-lens inspection, can be performed even by briefly trained nurses, detects as many CIN II/III lesions, and can be combined with immediate point-of-care treatment.

If a nurse in a low-resource setting in rural India can detect the same precancerous cervical lesions as the trained consultant gynecologists, specialist colposcopists, histopathologists, cytologists, consultant oncologists and midwives who make up a Western national screening program, then the totemic value of such a program is clearly questionable.

References

http://www.bmj.com/content/346/bmj.f3935/rr/651501

http://www.ncbi.nlm.nih.gov/pubmed/26014371

http://www.ncbi.nlm.nih.gov/pubmed/25366674

http://www.ncbi.nlm.nih.gov/pmc/articles/PMC3921262/

http://www.ncbi.nlm.nih.gov/pubmed/24716265

http://static.www.bmj.com/sites/default/files/response_attachments/2013/...

http://static.www.bmj.com/sites/default/files/response_attachments/2013/...

Competing interests: No competing interests

I agree with Prasad et al on their analysis (1). However, the authors stated that “there was a trend towards higher mortality in the screened group (61 deaths, 2.97%) compared with the control group (42 deaths, 2.05%; P=0.059)” (their reference 24).
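The quoted figures can be checked with a two-proportion z-test. This is a sketch under our own assumptions, not the trial's analysis: 2,052 participants per arm (consistent with 61 deaths being 2.97% and 42 deaths being 2.05%, but to be checked against the trial report), a pooled-variance test without continuity correction, and a Wald confidence interval for the risk difference. The trial may have used a different test.

```python
from math import sqrt
from statistics import NormalDist


def two_proportion_test(deaths_a: int, n_a: int, deaths_b: int, n_b: int):
    """Return (risk difference, 95% CI, two-tailed P) comparing arm a with arm b."""
    p_a, p_b = deaths_a / n_a, deaths_b / n_b
    diff = p_a - p_b

    # Unpooled (Wald) standard error for the confidence interval.
    se_diff = sqrt(p_a * (1 - p_a) / n_a + p_b * (1 - p_b) / n_b)
    z_crit = NormalDist().inv_cdf(0.975)
    ci = (diff - z_crit * se_diff, diff + z_crit * se_diff)

    # Pooled standard error under the null hypothesis of equal risk.
    pooled = (deaths_a + deaths_b) / (n_a + n_b)
    se_null = sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = diff / se_null
    p_value = 2 * (1 - NormalDist().cdf(abs(z)))
    return diff, ci, p_value


# Assumed arm sizes (hypothetical for this sketch): 2052 screened, 2052 control.
diff, ci, p = two_proportion_test(61, 2052, 42, 2052)
print(f"risk difference {diff:.2%}, 95% CI {ci[0]:.2%} to {ci[1]:.2%}, P = {p:.3f}")
```

Under these assumptions the test gives a P value of about 0.058, close to the published 0.059, and the 95% confidence interval for the absolute difference in all-cause mortality spans zero.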

The use of the phrase “trend towards” is inappropriate, as has also been pointed out in this very journal. If one obtains a two-tailed P-value of 0.06 and doubles the sample size, there is around a 22% chance of obtaining a P-value higher than 0.06 (2). By comparison, if one obtains a P-value of 0.01 and doubles the sample size, there is approximately a 14% chance of obtaining a P-value higher than 0.01 (2).
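The 22% and 14% figures can be reproduced with a short calculation, under an assumption that is ours and not necessarily the method of reference 2: the observed z score z1 is taken as an estimate of the true effect with a flat prior, so the z score of an additional, equally sized batch of data is predicted to be normal with mean z1 and variance 2. The pooled z score is then normal with mean √2·z1 and variance 1.

```python
from math import sqrt
from statistics import NormalDist


def prob_p_exceeds_after_doubling(p_observed: float) -> float:
    """Chance that the final two-tailed P-value exceeds p_observed after the
    sample size is doubled.

    Assumption (ours): flat-prior predictive distribution for the new data,
    so the pooled z score is Normal(mean=sqrt(2) * z1, sd=1).
    """
    z1 = NormalDist().inv_cdf(1 - p_observed / 2)  # observed two-tailed z score
    pooled = NormalDist(mu=sqrt(2) * z1, sigma=1.0)
    # The final P-value exceeds p_observed exactly when |pooled z| < z1.
    return pooled.cdf(z1) - pooled.cdf(-z1)


for p_obs in (0.06, 0.01):
    chance = prob_p_exceeds_after_doubling(p_obs)
    print(f"P = {p_obs}: {chance:.0%} chance the P-value ends up higher")
# prints 22% for P = 0.06 and 14% for P = 0.01
```

The point of the sketch is that a P-value near 0.05 carries substantial uncertainty about where it will land with more data, in either direction.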

I conducted a simple electronic search to check whether the authors of the referenced study (ref. 24 in Prasad et al) used the word “trend” in their article in regard to this result (P=0.059). They did not. However, in their discussion the small sample size was used as an excuse for this statistically non-significant difference in all-cause mortality (3).

The possibility that an observed P-value might fluctuate in either the right or the wrong direction (depending on one’s view) should be kept in mind.

1. Prasad V, Lenzer J, Newman DH. Why cancer screening has never been shown to "save lives"-and what we can do about it. BMJ 2016; 352: h6080.

2. Wood J, Freemantle N, King M, Nazareth I. Trap of trends to statistical significance: likelihood of near significant P value becoming more significant with extra data. BMJ 2014; 348: g2215.

3. Saghir Z, Dirksen A, Ashraf H, et al. CT screening for lung cancer brings forward early disease. The randomised Danish Lung Cancer Screening Trial: status after five annual screening rounds with low-dose CT. Thorax 2012; 67: 296-301.

Email: jesper.m.kivela@helsinki.fi

Competing interests: I like statistics.

Dear Editors

Following Dr L Sam Lewis' rapid response, I wish to add my support to his assertion of what a reasonable person (myself included) would want to know when considering undergoing a test.

What does it do?

How is it done?

What are the possible results and what do they mean?

Are there any complications from doing (or not doing) the test?

How will the results affect my life short and long-term?

What are the risks that a result will lead to further treatment/tests that have a higher complication rate (and associated mortality or morbidity) without improving my life?

The facts are:

If the screening programs set out to detect cancers, most (if not all) of them will achieve this.

If the tests set out to detect cancers early enough to reduce deaths from specified cancers (disease-specific mortality), many but not all will achieve this.

However, if the screening tests aim to reduce all-cause mortality/morbidity within the tested population through early detection of cancer (or not), few screening programs will be able to claim this.

Detractors claim that many of the trials do not have the power or sensitivity to answer the last question, that of all-cause mortality, and that the baseline mortality rate of the population will mask the effects of the screening program on the proportionally small number of people who have the cancer.

The controversy over prostate cancer screening exposes the very issue of complications of investigations and treatment (surgical and medical) and their relation to disease-specific and all-cause mortality rates, thus giving many clinicians licence to ask similar questions about other programs as a matter of due diligence.

Keeping in mind that the average punter would essentially ask (in various forms and phrasings) whether undergoing a screening test will reduce his or her chances of premature death (be it from cancer or from any other cause), this is a question that researchers cannot ignore. It is also the kind of question a good meta-analysis sets out to answer by pooling suitable study populations appropriately.

I suspect that there are enough people who feel that, if the screening tests make no difference to all-cause mortality, they would not consider having them. As a result, the risk-benefit balance and cost-effectiveness of the screening programmes would be significantly affected.

Like Dr Lewis, I am reminded by this issue of the classic Cardiac Arrhythmia Suppression Trial (CAST) of the early 1990s. It was known that class I antiarrhythmic agents suppress premature ventricular complexes (PVCs), and PVCs after myocardial infarction (MI) were seen as a leading cause of death, so it was logically expected that class I antiarrhythmic agents would reduce post-MI deaths. CAST (1) and CAST II showed that class I antiarrhythmic agents actually increased post-MI cardiac deaths (both arrhythmic and non-arrhythmic) and all-cause mortality compared with placebo. Imagine telling patients that "this drug will prevent the heart from fluttering but will also increase your risk of premature death"!

It is reasonable to expect that when the person on the street asks how the test will affect his or her life, this question extends beyond the realm of "disease-specific mortality" to long-term morbidity and premature mortality (from all causes). Claims that the studies available so far do not have the ability to answer these questions should not be a reason to ignore this important line of inquiry. Surely, after years of registry data collection, screening programmes could re-examine their databases to try to address this, and if not, then meta-analysis could be applied to provide a meaningful answer.

Just because we can't answer the real questions now does not mean we should stop asking the right questions.

Prudens quæstio dimidium scientiæ: Asking the right questions is half the science (Roger Bacon 1958)

Reference:

Competing interests: No competing interests

No amount of statistical sophistry from Professors Sasieni and Wald will alter the simple request from the competent patient to be given an estimated chance of dying with and without screening. Indeed, this is a reasonable request for any offered health benefit. Kearns does himself a disservice by arguing that we all have a 100% chance of dying, thereby dismissing the sound argument for all-cause mortality. How shall we know if screening (and its attendant interventions) kills more people than it saves? All I want is a simple body count, within any specified time frame (the duration of the screening RCT will suffice). Did more patients die in the control group or the screening group in the x years of the study? Will I have a greater or lesser chance of dying in the next 5 years if I accept screening?

So far as I can tell, meta-analyses of RCTs show that neither breast screening nor aortic aneurysm screening makes any body-count difference, and nobody knows what difference cervical screening makes.

Sasieni, Wald, Kearns, Dickinson, Hope et al should look back at the many trials, e.g. of clofibrate, which showed that deaths from ischaemic heart disease were significantly reduced but more people died in the treated group than in the control group. Eventually, thankfully, the drug was withdrawn.

Body count: easy to measure, easy to understand. Any cancer screening trial which fails to record body count calls into question all its other counts.

Competing interests: No competing interests

In the article about screening and all-cause mortality, Prasad et al (1) identify two reasons that cancer screening might reduce disease-specific mortality without significantly reducing all-cause mortality, yet there is a third reason which they do not discuss: biased cause-of-death determination. Accurate cause-of-death determination is highly problematic in older persons with pre-existing comorbidities which worsen during cancer treatment. This is compounded by the fact that attempts to capture screening-caused deaths require non-blinded cause-of-death determination. If ambiguous-cause deaths are more often labeled as off-target mortality (i.e. comorbidities) in the screened group, but as target mortality in the control group, then the entire effect of decreased disease-specific mortality may be an artifact of bias. Readers should consider this when assessing the validity of decreases in disease-specific mortality in clinical trials of screening, especially when there is inconsistency with all-cause mortality.
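To make the mechanism concrete, here is a small arithmetic sketch. All counts and labelling shares are hypothetical, chosen only for illustration: both arms have exactly the same deaths, and the only difference is how often adjudicators attribute ambiguous deaths to the target cancer.

```python
from dataclasses import dataclass


@dataclass
class TrialArm:
    """Deaths in one (hypothetical) trial arm, split by clarity of cause."""
    clear_cancer: int           # deaths unambiguously due to the target cancer
    clear_other: int            # deaths unambiguously due to other causes
    ambiguous: int              # deaths whose cause adjudicators must judge
    share_called_cancer: float  # fraction of ambiguous deaths labelled target cancer

    def cancer_deaths(self) -> float:
        """Deaths recorded as due to the target cancer after adjudication."""
        return self.clear_cancer + self.ambiguous * self.share_called_cancer

    def all_deaths(self) -> int:
        """All-cause deaths: unaffected by how causes are labelled."""
        return self.clear_cancer + self.clear_other + self.ambiguous


# Identical true deaths; only the labelling of ambiguous deaths differs.
screened = TrialArm(clear_cancer=80, clear_other=900, ambiguous=40, share_called_cancer=0.25)
control = TrialArm(clear_cancer=80, clear_other=900, ambiguous=40, share_called_cancer=0.75)

reduction = 1 - screened.cancer_deaths() / control.cancer_deaths()
print(f"apparent cancer-specific reduction: {reduction:.0%}")           # 18%
print(f"all-cause deaths: {screened.all_deaths()} vs {control.all_deaths()}")  # 1020 vs 1020
```

In this toy example the labelling difference alone produces an apparent 18% reduction in cancer-specific mortality, while all-cause mortality is identical in both arms.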

In clinical trials, disease-specific mortality suffers from both an Achilles heel and a Catch-22. The Achilles heel is the reliance upon accurate cause-of-death determination. While death is simple and dichotomous, cause of death is complex, non-dichotomous, and frequently multifactorial. Assessing the presence or absence of a specific cause of death is therefore a dichotomization of a notoriously inaccurate, non-dichotomous determination. Use of a blinded determiner with a large enough sample size should mitigate the inaccuracies (by distributing them equally between the two groups), but blinding prevents capture of intervention-caused off-target deaths, which then biases the outcome in favor of the intervention. This is the Catch-22 of disease-specific mortality in a clinical trial: blinded determination is biased because it misses intervention-caused off-target deaths, while non-blinded determination is biased prima facie. Therefore we should not be surprised that decreases in disease-specific mortality are frequently inconsistent with all-cause mortality.

Do the authors think that it is valid to consider trial outcomes as diagnostic tests used to detect the presence or absence of an effect (i.e. fewer deaths), and then analyze these outcomes within the framework of sensitivity and specificity? From this perspective, the authors would seem to be arguing that disease-specific mortality has high sensitivity but may have low specificity. On the other hand, all-cause mortality has low sensitivity (due to the large sample sizes required to achieve statistical significance) but has attributes which give it high specificity (it is truly dichotomous, simple to accurately determine, and it is the definition of “fewer deaths”). Analyzing the issue from this perspective would distill it down to two questions: 1) What is the false-positive rate for disease-specific mortality in clinical trials? 2) Is that rate acceptable?

1. Prasad V, Lenzer J, Newman DH. Why cancer screening has never been shown to “save lives”—and what we can do about it. BMJ. 2016 Jan 6;352:h6080. doi: 10.1136/bmj.h6080.

Competing interests: No competing interests

Prasad accepts that all-cause mortality is an insensitive outcome in the assessment of the efficacy and harm arising from medical screening programmes, but says it is necessary. The use of insensitive and uninformative endpoints in clinical trials is bad science. It would delay and possibly prevent effective programmes from being adopted (and prolong trials of ineffective interventions). Disease specific outcomes need to be examined.

All-cause mortality will generally be dominated by causes of death that are unrelated to the screening and any intervention that follows, so that any benefit (or harm) from screening will be concealed. This is not to say that a direct assessment of all-cause mortality should be ignored. It may indicate possible benefit or harm but failure to reveal either does not exclude the existence of clear and sufficient evidence of benefit or harm. It is the insensitivity (and lack of specificity) of all-cause mortality as an outcome that is the problem, not the outcome itself.
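One rough way to see the size of this insensitivity is the standard normal-approximation sample-size formula for comparing two proportions. The sketch is ours and every input is hypothetical: target-cancer deaths of 0.5% over follow-up, a 20% relative reduction from screening, 9.5% deaths from other causes that screening is assumed to leave unchanged, a two-sided alpha of 0.05 and 80% power.

```python
import math
from statistics import NormalDist


def n_per_arm(p_control: float, p_screened: float,
              alpha: float = 0.05, power: float = 0.80) -> int:
    """Participants per arm to detect p_control vs p_screened with a two-sided
    test, using the usual normal approximation with unpooled variances."""
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)
    z_beta = NormalDist().inv_cdf(power)
    variance = p_control * (1 - p_control) + p_screened * (1 - p_screened)
    return math.ceil((z_alpha + z_beta) ** 2 * variance / (p_control - p_screened) ** 2)


# Hypothetical inputs, for illustration only.
cancer_control = 0.005                   # target-cancer deaths without screening
cancer_screened = cancer_control * 0.80  # 20% relative reduction with screening
other_causes = 0.095                     # other-cause deaths, assumed unchanged

n_cancer = n_per_arm(cancer_control, cancer_screened)
n_all = n_per_arm(cancer_control + other_causes, cancer_screened + other_causes)
print(f"cancer-specific mortality: {n_cancer:,} per arm")
print(f"all-cause mortality:       {n_all:,} per arm")
```

With these inputs the absolute reduction is 0.1 percentage point for both outcomes, yet the all-cause comparison needs roughly 20 times as many participants per arm, because its variance is driven by the much larger total death rate.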

Competing interests: No competing interests

As Muris Cabaravdic correctly points out, everybody eventually dies. Hence it seems puzzling to criticise screening programmes for not keeping people alive indefinitely. Instead we may ask if screening programmes keep people alive longer. For cancers diagnosed in England, the National Cancer Intelligence Network have done some wonderful work examining the relative survival of individuals broken down by how their cancer was diagnosed (http://www.ncin.org.uk/publications/routes_to_diagnosis). The data is freely available (in workbook 'a'). For female breast, colorectal, and cervical cancer, 3 year relative survival estimates following a screening diagnosis are 99%, 93% and 96% (respectively). Taking a weighted average of the non-screening diagnoses, the estimates are 87%, 60% and 71%. These figures suggest that screening for cancer can help people to live longer.

There are potential problems with screening, such as over-diagnosis, the increase in healthcare costs, and false-positive results that may cause unnecessary morbidity/mortality and psychological distress. Economic evaluations allow us to weigh up the benefits and harms of screening, using the best available evidence, to decide if they represent value for money and should be implemented (see, for example: http://gut.bmj.com/content/56/5/677.short).

In summary, for many cancers there is evidence that screening reduces cancer-specific mortality, and that it keeps people alive for longer. It would be wonderful if screening programmes stopped people dying forever (and so reduced all-cause mortality), but their failure to do so should not be seen as a failure.

Competing interests: No competing interests

Response to Dr. Rietsema & Response to Dr. Sasieni and Dr. Wald

* Response to Dr. Rietsema

Dr. Rietsema raises an important point regarding the importance of morbidity. If an intervention performed on healthy people had no impact on mortality, but improved quality of life, then there would be no dispute, and it would be widely endorsed. If there were randomized trial evidence showing that any screening test did this, we would be grateful to see it.

Without this evidence, unfortunately, Dr. Rietsema’s argument relies on speculation. One can easily, and perhaps more correctly, speculate the opposite: that on balance quality of life is worse. Consider the tremendous harms of screening, including false positives and overdiagnosis, which we detail. Also consider that in many nations, such as the US, precancerous lesions like DCIS (found via screening) are treated very aggressively, with many patients receiving mastectomy, radiation, double mastectomy, or other invasive care [1]. Finally, improvements in adjuvant treatment of breast cancer have likely reduced the benefits of screening by curing some women with palpable tumors who would have had worse outcomes in prior eras.

At the end of the day, arguing about quality of life in either direction is speculative and, without careful measurement and proof, not the sort of claim that can justify widespread screening.

I agree that future trials should capture this.

* Response to Dr. Sasieni and Dr. Wald

Dr. Sasieni and Dr. Wald note that we make a “well recognized fallacy in preventive medicine,” which they and their “colleagues regularly teach on.” Moreover, they find it “surprising to see [our article] appearing in the BMJ.”

Unfortunately, these comments are empty persuasive tactics. In the former case, they appeal to their authority (regularly teach on); in the latter case (surprising to see), they insinuate that our article does not meet the standards or focus of the Journal. Yet the BMJ has published many articles advancing the conversation on cancer screening, including ones entitled “Should we use total mortality rather than cancer specific mortality to judge cancer screening programmes? Yes” [2] and “Harms from breast cancer screening outweigh benefits if death caused by treatment is included” [3]. I think it is not surprising that the BMJ published our article; doing so is in line with the evolution of thought on this topic.

I wish to make a plea that as we continue to debate cancer screening, we do our very best to stick to the facts, and facts alone. It is clear this topic provokes strong emotions from many who consider it, but it is a disservice to our profession to attempt empty persuasion. Ironically, this is an objection we have with cancer screening campaigns themselves.

The content of Dr. Sasieni and Dr. Wald’s argument is that it is difficult to show an overall mortality benefit with screening. Indeed, we agree it is difficult, yet not impossible, and we cite others who have calculated the precise sample size needed to do so.

Furthermore, they argue “preventing all cases of polio has an imperceptible effect on total mortality yet it would be absurd to regard the value of polio vaccination as unproven.”

Here, the analogy is faulty. The reality is that no cancer-screening program is anything like the polio vaccine (eliminating all cases). Most screening tests have effects on disease-specific mortality that are marginal at best, highly disputed, and absent for some interventions in meta-analysis. Most screening tests have significant and poorly documented harms. Screening's effects on overall mortality are entirely uncertain. When it comes to cancer screening, one may legitimately wonder if the off-target harms and deaths outweigh the benefits. One may legitimately wonder if, as a result, overall mortality is improved. Screening is not comparable to the polio vaccine. For these reasons we believe it is both necessary and ethical to study overall mortality. For the time being, at a minimum, healthy people deserve to be informed of these facts, and healthy people should not be misleadingly told screening has been proven to ‘save lives.’

References

[1] http://oncology.jamanetwork.com/article.aspx?articleid=2427491

[2] http://www.bmj.com/content/343/bmj.d6395.long

[3] http://www.bmj.com/content/346/bmj.f385

Competing interests: No competing interests

Re: Why cancer screening has never been shown to “save lives”—and what we can do about it

"To obtain a 95% confidence interval of +/-1% for demonstrating a 3% decline in all-cause mortality with cancer screening, a study size of 596,200 persons is required" a German analysis concluded.

Few clinical trials have followed that many people for decades.

Reference

https://www.ncbi.nlm.nih.gov/pmc/articles/PMC6121088/

Competing interests: No competing interests